Proven Performance

State-of-the-Art Benchmarks

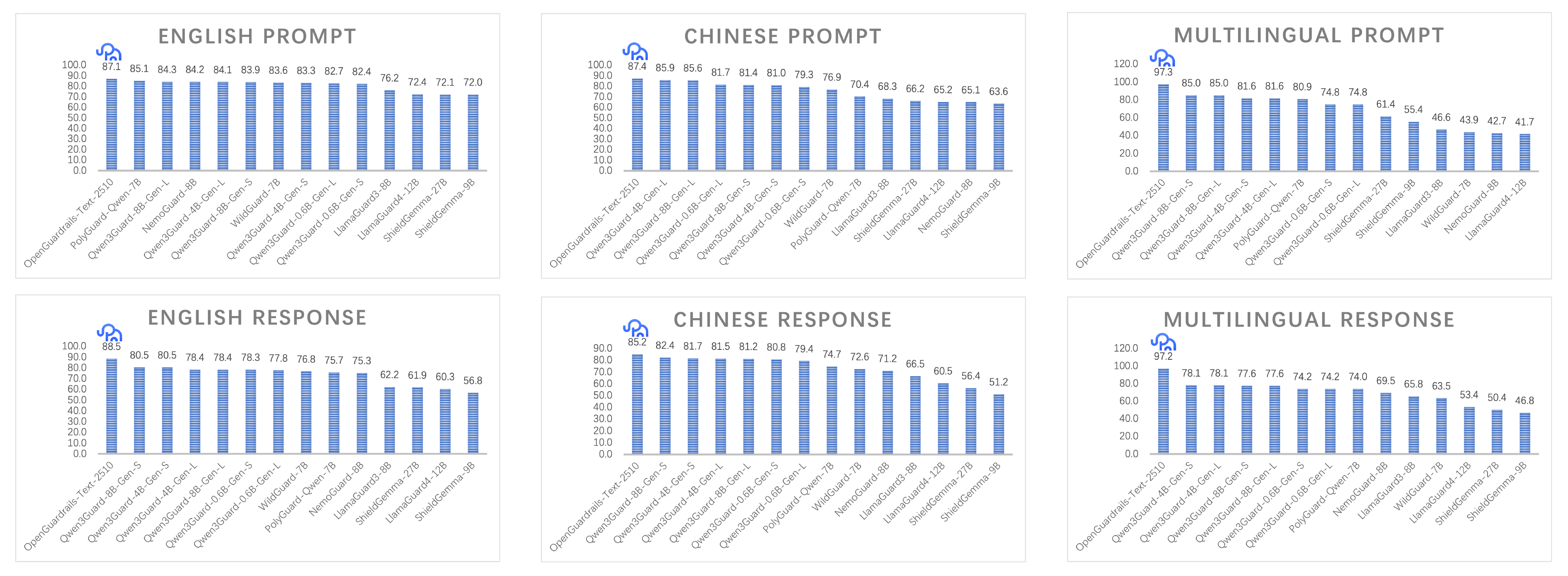

OpenGuardrails achieves SOTA results across multilingual safety benchmarks, outperforming LlamaGuard, Qwen3Guard, and other leading guard models.

Unified LLM Architecture

Single 14B dense model quantized to 3.3B via GPTQ. Handles both content-safety and manipulation detection with superior semantic understanding.

Configurable Policy Adaptation

Dynamic per-request policy with continuous sensitivity thresholds. Tune precision-recall trade-offs in real time via probabilistic logit-space control.
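
As a rough illustration of how a continuous sensitivity threshold could act in logit space, here is a minimal Python sketch. It assumes the guard model exposes two logits per request, one for a "safe" verdict and one for an "unsafe" verdict. The function names, the two-logit setup, and the threshold semantics are hypothetical and are not taken from the OpenGuardrails API.

```python
import math


def unsafe_probability(logit_safe: float, logit_unsafe: float) -> float:
    """Two-class softmax probability of the 'unsafe' verdict.

    Hypothetical helper: assumes the model yields one logit per verdict.
    A two-class softmax equals the sigmoid of the logit difference.
    """
    d = logit_unsafe - logit_safe
    # Numerically stable sigmoid: never exponentiate a large positive number.
    if d >= 0:
        return 1.0 / (1.0 + math.exp(-d))
    e = math.exp(d)
    return e / (1.0 + e)


def is_flagged(logit_safe: float, logit_unsafe: float, threshold: float = 0.5) -> bool:
    """Flag a request when P(unsafe) reaches the per-request threshold.

    A lower threshold is more sensitive (higher recall); a higher
    threshold is stricter about flagging (higher precision).
    """
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    return unsafe_probability(logit_safe, logit_unsafe) >= threshold


# Same model output, two different per-request policies.
p = unsafe_probability(1.0, 2.0)           # about 0.731
strict = is_flagged(1.0, 2.0, threshold=0.5)   # flagged
lenient = is_flagged(1.0, 2.0, threshold=0.9)  # not flagged
```

Because the threshold is just a number passed with each call, the same model output can be judged under different policies without retraining or reloading anything.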

119 Languages

Robust multilingual coverage with SOTA results on English, Chinese, and cross-lingual benchmarks. Also contributes a 97k Chinese safety dataset.

Production Efficiency

P95 latency of 274.6 ms under high concurrency. GPTQ quantization enables real-time inference at enterprise scale without sacrificing accuracy.
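
For readers less familiar with the metric, a P95 latency is the value that 95% of measured requests stay at or below. The sketch below computes it with the nearest-rank method. The function name is hypothetical, the sample values are made up for illustration, and nothing here reflects how the published figure was measured.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Return the pct-th percentile of samples using the nearest-rank method."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    # Nearest rank: the smallest 1-based rank covering pct percent of the samples.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Illustrative latencies in milliseconds (invented, not measured).
latencies_ms = [10 * i for i in range(1, 21)]  # 10, 20, ..., 200
p95 = percentile_nearest_rank(latencies_ms, 95)  # the 19th of 20 sorted values
```

Other percentile definitions interpolate between neighboring samples, so reported values can differ slightly depending on the method used.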